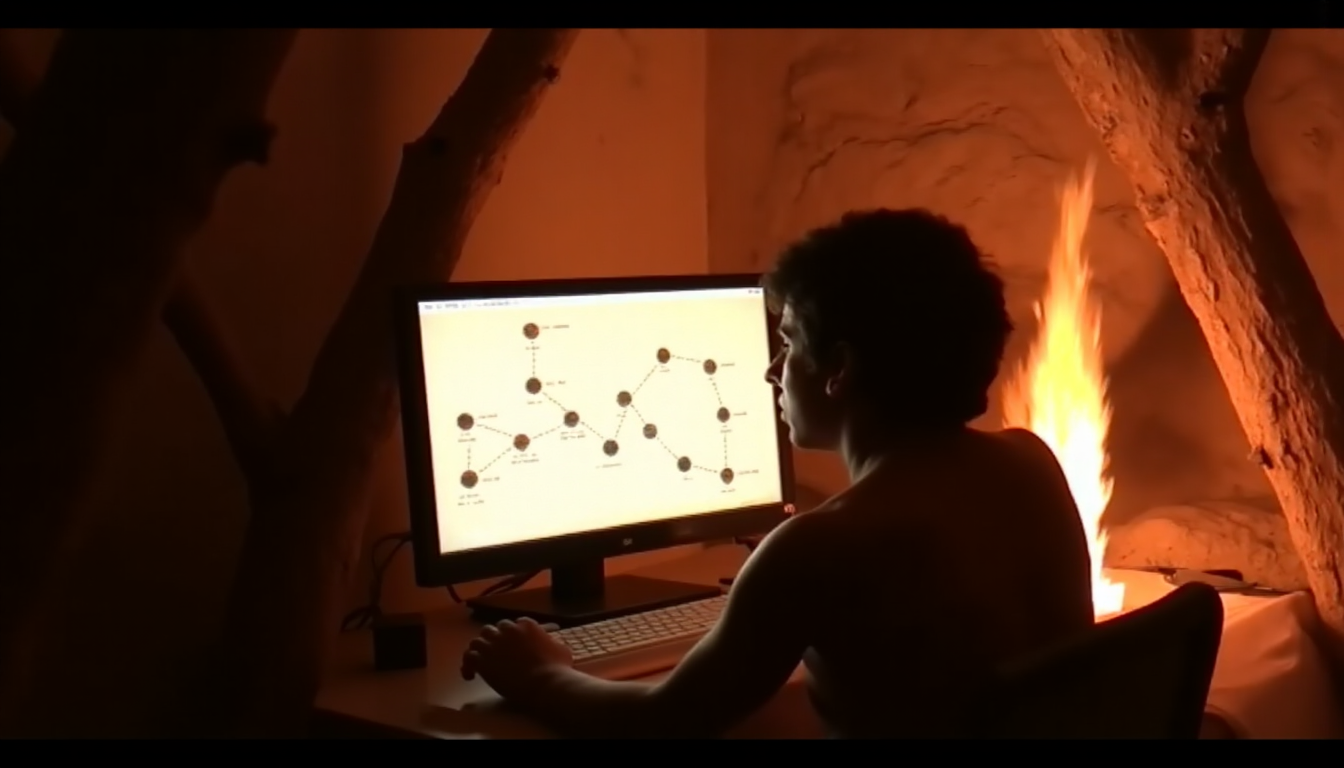

A skill for Claude Code dropped on HN this week and hit 834 points. Called “Caveman.” It makes the model talk like a caveman: drops articles, drops filler, drops hedging. Claims 75% token reduction while keeping 100% technical accuracy.

348 comments. Half the people are posting GIFs. The other half are arguing about whether removing “the” from LLM outputs constitutes a cognitive impairment for the model. One person quoted Kevin Malone from The Office.

Nobody noticed the actual story.

The Memo Finally Got Through

For three years the LLM industry operated on a simple premise: more tokens, more compute, better model. Scale was the moat. Context windows ballooned from 4K to 1M. Parameter counts went vertical. Anthropic, OpenAI, Google — all playing the same game of “our model is bigger.”

Then the bills came due.

Caveman is a symptom. Not a cause. The cause is that inference costs are a P&L problem and the people running the numbers finally convinced the people running the product that verbosity is a feature nobody asked for and everyone pays for.

The math is not subtle. GPT-4o generates roughly 1.3 tokens per word of output in typical conversational use. A 500-word response is 650 tokens, at roughly $0.01 per 1K tokens on the cheap end. That’s $0.0065 per reply. Sounds fine. Until you’re running 10 million conversations a day. Then it’s $65,000 daily. Then it’s about $23.7 million a year, for text nobody reads.
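The arithmetic can be checked in a few lines. This is a back-of-envelope sketch, not a pricing model: it assumes about 1.3 tokens per word (the ratio implied by 500 words mapping to 650 tokens), one reply per conversation, and the flat $0.01 per 1K tokens quoted above. All constant and function names are illustrative.

```python
# Back-of-envelope output cost, using the figures quoted in the text.
TOKENS_PER_WORD = 1.3        # assumption: implied by 500 words -> 650 tokens
PRICE_PER_1K_TOKENS = 0.01   # USD, the "cheap end" figure from the text
WORDS_PER_REPLY = 500
REPLIES_PER_DAY = 10_000_000  # assumption: one reply per conversation


def reply_cost(words: float,
               tokens_per_word: float = TOKENS_PER_WORD,
               price_per_1k: float = PRICE_PER_1K_TOKENS) -> float:
    """Return the USD cost of generating a reply of the given word count."""
    tokens = words * tokens_per_word
    return tokens / 1000 * price_per_1k


per_reply = reply_cost(WORDS_PER_REPLY)
daily = per_reply * REPLIES_PER_DAY
yearly = daily * 365

print(f"per reply: ${per_reply:.4f}")
print(f"per day:   ${daily:,.0f}")
print(f"per year:  ${yearly / 1e6:.1f}M")
```

Changing `WORDS_PER_REPLY` is the whole argument in miniature: the bill scales linearly with how much the model says.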

The Caveman project author benchmarked specific tasks. Explain a React re-render bug: 1180 tokens normally, 159 tokens in Caveman mode. That’s an 87% reduction on the output side. The model still “thinks” the same amount. But the user gets a pager message instead of a whitepaper.
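The reduction figure follows directly from the two token counts. A minimal sketch, using only the numbers reported for the React example; the function name is made up for illustration.

```python
def token_reduction(baseline_tokens: int, compressed_tokens: int) -> float:
    """Return the fraction of output tokens saved relative to the baseline."""
    return 1 - compressed_tokens / baseline_tokens


# Token counts reported for the React re-render explanation.
reduction = token_reduction(1180, 159)
tokens_saved = 1180 - 159

print(f"{reduction:.1%} fewer output tokens ({tokens_saved} saved)")
```

The exact value is about 86.5%, which rounds to the 87% quoted. Note that this measures visible output only; it says nothing about any reasoning tokens spent before the answer.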

The paper they cite — “Brevity Constraints Reverse Performance Hierarchies in Language Models” from March 2026 — found that brief responses can actually improve accuracy by up to 26 percentage points on certain tasks. Not degrade. Improve. The hypothesis is that concision forces the model to skip the confident-wrong path and get to the actual answer faster.

The Open Source Efficiency Wave

DeepSeek V4 is supposed to drop this month. The OSINT on it has been circulating for weeks: 1 trillion parameters, 1 million token context, native multimodal, 83.7% on SWE-bench Verified. If the benchmark numbers hold, that puts it ahead of Claude Opus 4.5’s 80.9%, and it ships with open weights.

Here’s the part nobody in the Western press has written about: DeepSeek had to pivot their entire training infrastructure mid-run. The original plan was Huawei Ascend 910B chips. Those failed during training. They had to switch to NVIDIA GPUs. This is the same company that shocked the industry with V3’s Mixture of Experts architecture, which delivered GPT-4-class performance at a fraction of the training cost.

The pattern is consistent. DeepSeek builds efficient. Not because they’re benevolent. Because they had no choice. Export controls cut them off from the latest NVIDIA hardware. So they figured out how to do more with less. And it worked.

Compare that to the American labs burning through H100 clusters like they’re printing money. GPT-5.3 dropped in March with 1M token context and “computer use” baked in. Impressive. Also costs what it costs. The inference margin on that model is not great.

The iPhone Moment

Gemma 4 running on iPhone is the other story that dropped this week. Not through some cloud API. Actually on-device. Through Google’s AI Edge Gallery app, iPhone users can run a 4B parameter Gemma model locally. No network round-trip. No API costs. No data leaving the device.

This is not new in concept. Apple’s Neural Engine has been running on-device inference since the A11 Bionic chip in 2017. What’s new is that the model is competent. Not demo-ware. Not a toy. The kind of model you could actually use for a real task and get a real result.

4B parameters sounds small next to GPT-4’s rumored 1.7T. But it doesn’t need to be GPT-4. It needs to be good enough for the 80% of tasks that don’t require frontier-level reasoning. And it runs on a device that fits in your pocket.

This Is the Mainframe-to-PC Shift

Let me tell you something about computing cycles. Every 10 to 15 years, the industry goes through the same argument.

In the 60s and 70s, computing was centralized. Mainframes in climate-controlled rooms. Programmers submitted batch jobs and waited. The assumption was that serious computation required serious infrastructure.

Then the microprocessor got cheap enough. The Apple II and IBM PC happened. And suddenly the guy in the garage had more computing power than the university’s mainframe did a decade earlier. The mainframes didn’t disappear — they got faster too — but the center of gravity shifted.

The 90s gave us the web as a client-server phenomenon. The 2000s gave us mobile — computing moved from the desk to the pocket. The 2010s gave us cloud — but cloud is really just mainframes with better networking. You rent time on someone else’s iron.

Now local models are the new microprocessors.

Gemma 4 on iPhone. Mistral running on MacBooks with MLX. DeepSeek V3’s MoE design activating only about 37B of its 671B parameters per token. The efficiency curve is bending fast.

What Changes

Not everything. The frontier labs will keep training bigger models for tasks that actually need them. Protein folding, climate modeling, formal verification — these need every floating point operation we can muster.

But the day-to-day of software development, the stuff that fills 90% of developer hours? That’s being commoditized by efficiency. A 4B parameter model that fits in RAM on a consumer laptop and runs at 30 tokens per second doesn’t need a GPU cluster. It doesn’t need an API key. It doesn’t send your code to a third party.
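A rough way to sanity-check the "fits in RAM" claim is to estimate the weight footprint at a few precisions. This sketch counts weights only; it ignores the KV cache, activations, and runtime overhead, and the bit widths are illustrative assumptions rather than anything the Gemma release specifies. The 159-token answer length is borrowed from the Caveman benchmark above.

```python
def weight_footprint_gb(params: float, bits_per_param: int) -> float:
    """Approximate size of the model weights in decimal gigabytes."""
    return params * bits_per_param / 8 / 1e9


def generation_seconds(tokens: int, tokens_per_second: float) -> float:
    """Time to emit a given number of tokens at a steady decode rate."""
    return tokens / tokens_per_second


PARAMS = 4e9  # the 4B parameter model discussed in the text

for bits in (16, 8, 4):  # assumed precisions: fp16, int8, 4-bit quantized
    print(f"{bits:>2}-bit weights: {weight_footprint_gb(PARAMS, bits):.1f} GB")

# A 159-token answer at the 30 tokens per second quoted in the text.
print(f"159 tokens at 30 tok/s: {generation_seconds(159, 30):.1f} s")
```

Under these assumptions the weights come to a few gigabytes once quantized, which is why a laptop or a recent phone is a plausible host.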

Caveman hit 834 points on HN. The top comment was someone saying “this is what Grug Brain was always about.” Grug Brain — the fictional caveman developer whose philosophy was “keep it simple, avoid unnecessary complexity.” The character was created as a parody of enterprise software culture. Turns out it’s also a valid strategy for LLM prompting.

The efficiency decade isn’t coming. It’s here. The question is whether the companies built on the assumption of infinite compute will figure that out before someone who runs on 4 watts proves they didn’t need to.